
:::info

This paper is available on arxiv under CC 4.0 license.

Authors:

(1) Amir Noorizadegan, Department of Civil Engineering, National Taiwan University;

(2) D.L. Young, Core Tech System Co. Ltd, Moldex3D, Department of Civil Engineering, National Taiwan University & dlyoung@ntu.edu.tw;

(3) Y.C. Hon, Department of Mathematics, City University of Hong Kong;

(4) C.S. Chen, Department of Civil Engineering, National Taiwan University & dchen@ntu.edu.tw.

:::


2 Neural Networks

In this section, we explore the use of feedforward neural networks for interpolation problems, focusing on constructing accurate approximations of functions from given data points.

2.1 Feedforward Neural Networks

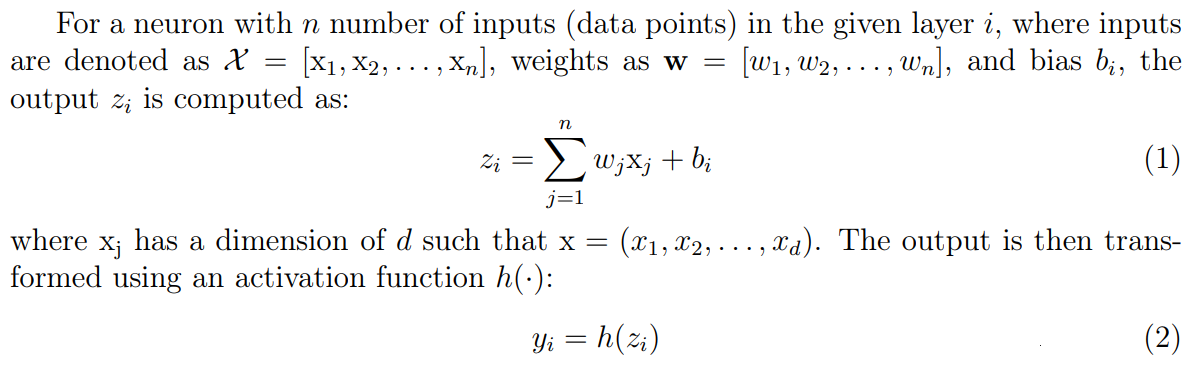

The feedforward neural network, also known as a multilayer perceptron (MLP), is a foundational architecture in artificial neural networks. It consists of interconnected layers of neurons, and information flows in one direction only: from the input layer, through the hidden layers, to the output layer. This process, termed “feedforward,” transforms input data into output predictions. The core constituents of a feedforward neural network are its individual neurons. A neuron computes a weighted sum of its inputs, adds a bias term, and applies an activation function to the result.
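To make the neuron computation concrete, here is a minimal NumPy sketch of a single neuron. The function name `neuron`, the tanh default activation, and the input, weight, and bias values are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def neuron(x, w, b, activation=np.tanh):
    """Output of one neuron: activation(w . x + b)."""
    z = np.dot(w, x) + b     # weighted sum of the inputs plus the bias term
    return activation(z)     # activation applied to the result

x = np.array([0.5, -1.0, 2.0])   # three inputs (illustrative values)
w = np.array([0.2, 0.4, 0.1])    # one weight per input
b = 0.3                          # bias term
print(neuron(x, w, b))           # tanh(0.1 - 0.4 + 0.2 + 0.3) = tanh(0.2)
```

Passing an identity activation, e.g. `neuron(x, w, b, activation=lambda z: z)`, returns the bare weighted sum plus bias, which is what an output-layer neuron typically computes.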


Hidden layers apply activation functions to introduce non-linearity, whereas the last (output) layer typically applies no activation function, so each output is an affine combination of the last hidden layer's activations.
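A sketch of a complete forward pass follows, under stated assumptions: the layer sizes `[1, 20, 20, 1]`, tanh hidden activations, and a scaled normal initialization are chosen for illustration, and the helper names `init_params` and `forward` are hypothetical. Only the hidden layers apply the activation; the output layer is purely affine.

```python
import numpy as np

def init_params(layer_sizes, seed=0):
    """Random normal weights and zero biases per layer (hypothetical init scheme)."""
    rng = np.random.default_rng(seed)
    params = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        W = rng.normal(0.0, np.sqrt(1.0 / n_in), size=(n_out, n_in))
        b = np.zeros(n_out)
        params.append((W, b))
    return params

def forward(params, x, activation=np.tanh):
    """Feedforward pass; x has shape (n_samples, n_inputs)."""
    a = x
    for W, b in params[:-1]:
        a = activation(a @ W.T + b)   # hidden layer: affine map, then non-linearity
    W, b = params[-1]
    return a @ W.T + b                # output layer: affine map only, no activation

params = init_params([1, 20, 20, 1])  # 1 input, two hidden layers of 20, 1 output
x = np.linspace(-1.0, 1.0, 5).reshape(-1, 1)
y = forward(params, x)
print(y.shape)                        # (5, 1)
```

Because the output layer is affine, scaling its weights and bias by a constant scales the network output by the same constant, which is a quick way to confirm that no activation is applied there.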

2.2 Neural Networks for Interpolation

In interpolation problems, feedforward neural networks can be used to approximate a function from a given set of data points. The goal is to construct a network that accurately predicts function values at points not included in that dataset.
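The interpolation setting can be sketched as follows, assuming a hypothetical 1-D target `sin(πx)` on [-1, 1], 11 equally spaced data points, and midpoints between them as evaluation points, so that no evaluation point belongs to the dataset. The relative L2 error is one common accuracy measure, assumed here; a simple piecewise-linear interpolant (`np.interp`) stands in for a trained network's predictions.

```python
import numpy as np

def target(x):
    # Hypothetical test function; the source does not specify one here.
    return np.sin(np.pi * x)

n_train = 11
x_train = np.linspace(-1.0, 1.0, n_train)   # given data points
u_train = target(x_train)                    # known function values

# Evaluation points: midpoints between neighbouring data points,
# so none of them appears in the training set.
x_test = 0.5 * (x_train[:-1] + x_train[1:])
u_test = target(x_test)

def relative_l2_error(u_pred, u_true):
    """Relative L2 error, one common way to score interpolation accuracy."""
    return np.linalg.norm(u_pred - u_true) / np.linalg.norm(u_true)

# Stand-in predictor: piecewise-linear interpolation of the data.
err = relative_l2_error(np.interp(x_test, x_train, u_train), u_test)
print(err)
```

A trained network's predictions at `x_test` would be scored with the same `relative_l2_error` call.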

2.2.1 Training Process

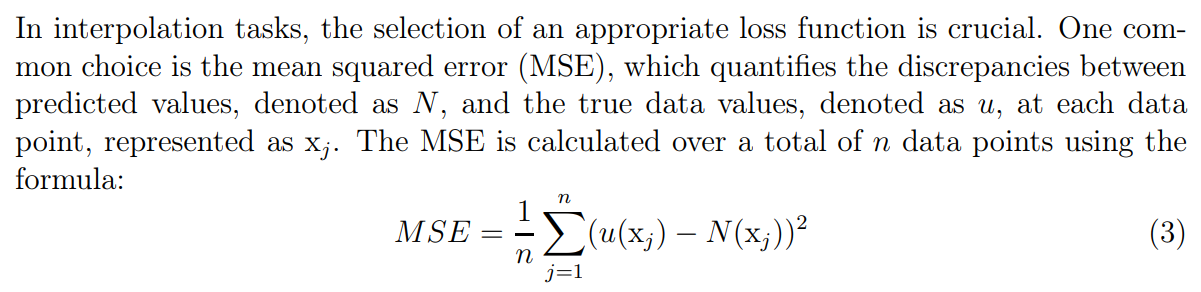

Training the neural network means adjusting its weights and biases to minimize the discrepancy between the predicted outputs and the given data values. This optimization is typically driven by gradient-based algorithms such as gradient descent, which iteratively update the network parameters in the direction of the negative gradient of a chosen loss function.
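A minimal sketch of gradient-descent training, assuming a network with one hidden layer of 20 tanh neurons, a linear output, a mean-squared-error loss, full-batch updates, and a fixed learning rate of 0.01. The gradients are derived by hand with the chain rule (backpropagation); in practice an automatic-differentiation framework would compute them. The sizes, target function, and step count are illustrative assumptions, not the paper's settings.

```python
import numpy as np

rng = np.random.default_rng(0)

# Training data: a hypothetical 1-D target sampled at 21 points.
x = np.linspace(-1.0, 1.0, 21).reshape(-1, 1)   # shape (N, 1)
u = np.sin(np.pi * x)                            # shape (N, 1)

# One hidden layer of 20 tanh neurons and a linear output (assumed sizes).
n_hidden = 20
W1 = rng.normal(0.0, 1.0, size=(1, n_hidden))
b1 = np.zeros((1, n_hidden))
W2 = rng.normal(0.0, np.sqrt(1.0 / n_hidden), size=(n_hidden, 1))
b2 = np.zeros((1, 1))

def predict(x):
    """Network output for inputs of shape (n_samples, 1)."""
    return np.tanh(x @ W1 + b1) @ W2 + b2

lr = 0.01            # learning rate (assumed)
losses = []
for step in range(5000):
    # Forward pass
    h = np.tanh(x @ W1 + b1)
    u_hat = h @ W2 + b2
    r = u_hat - u
    losses.append(np.mean(r ** 2))        # mean squared error

    # Backward pass: gradients of the MSE w.r.t. each parameter
    d_uhat = 2.0 * r / x.shape[0]         # dL/du_hat, shape (N, 1)
    dW2 = h.T @ d_uhat                    # (n_hidden, 1)
    db2 = d_uhat.sum(axis=0, keepdims=True)
    dz1 = (d_uhat @ W2.T) * (1.0 - h ** 2)  # tanh'(z) = 1 - tanh(z)^2
    dW1 = x.T @ dz1                       # (1, n_hidden)
    db1 = dz1.sum(axis=0, keepdims=True)

    # Gradient-descent update: step against the gradient
    W1 -= lr * dW1
    b1 -= lr * db1
    W2 -= lr * dW2
    b2 -= lr * db2

print(f"initial loss {losses[0]:.4f}, final loss {losses[-1]:.6f}")
```

After training, `predict` can be evaluated at points outside the training grid, e.g. `predict(np.array([[0.05]]))`, which is the interpolation use described above.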

2.2.2 Loss Function for Interpolation

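The loss function quantifies the discrepancy between the network's predictions and the given data values at the training points. Minimizing it guides the optimization process, steering the network toward accurate predictions.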
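For concreteness, a common choice of loss for interpolation is the mean squared error. Assuming that form, with hypothetical symbols $N$ (number of data points), $x_i$ and $u_i$ (data points and values), $\hat{u}(x;\theta)$ (network output), $\theta$ (weights and biases), and $\eta$ (learning rate), the loss, its gradient, and one gradient-descent step read:

```latex
\mathcal{L}(\theta) = \frac{1}{N}\sum_{i=1}^{N}\bigl(\hat{u}(x_i;\theta) - u_i\bigr)^2,
\qquad
\nabla_\theta \mathcal{L} = \frac{2}{N}\sum_{i=1}^{N}\bigl(\hat{u}(x_i;\theta) - u_i\bigr)\,\nabla_\theta \hat{u}(x_i;\theta),
\qquad
\theta \leftarrow \theta - \eta\,\nabla_\theta \mathcal{L}.
```

The factor $\nabla_\theta \hat{u}$ is what backpropagation computes layer by layer through the network.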
